Introduction

Financial institutions spend $61 billion annually on compliance in the US and Canada alone—yet banks detect only approximately 2% of global financial crime flows. Global illicit financial activity reached $4.4 trillion in 2025, while payment processors now handle 3.6 trillion transactions annually—a vastly larger attack surface than traditional defenses were built to cover.

Fraudsters have already embraced automation—while most banks remain locked into static, rule-based detection systems that cannot adapt. 69% of banking fraud professionals believe criminals are more advanced at using AI than financial institutions are at fighting it.

Agentic AI sits at the center of this dynamic—used offensively for synthetic identity creation and hyper-personalized social engineering, and defensively to build systems that perceive fraud patterns, make autonomous decisions, and learn continuously. Closing the criminal AI advantage gap starts with understanding how the technology works and where it's already delivering results.

TLDR:

- Rule-based fraud systems generate 90-95% false positives and catch only 2% of global financial crime

- Agentic AI delivers 200-2,000% productivity gains vs. 15-20% from generative AI copilots

- HSBC achieved 2-4x more fraud detection with 60% fewer false positives using agentic AI

- Multi-agent systems automate end-to-end KYC/AML workflows, escalating only high-complexity cases to human reviewers

- Eight in ten companies cite data quality as the primary barrier to scaling agentic AI

Why Traditional Fraud Detection Is No Longer Enough

The Rule-Based System Trap

Traditional fraud detection operates on static, pre-programmed thresholds that require manual reprogramming for every new fraud pattern. If a bank sets a rule to flag transactions over $10,000 from new devices, fraudsters simply structure their attacks around $9,999 transfers.

This cat-and-mouse game is reactive by design. By the time analysts identify a new fraud typology, update the rules, and deploy changes, criminals have already moved on to the next tactic.

Rule-based systems cannot learn or adapt — they process every transaction against the same fixed criteria regardless of context, customer history, or evolving threat landscapes.

The False Positive Crisis

AML transaction monitoring systems routinely generate 90% to 95% false positive rates. Sanctions screening can reach false positive rates as high as 99.5%. This means analysts spend the vast majority of their time investigating legitimate activity—clearing customers who did nothing wrong—while genuine fraud slips through the cracks buried in the noise.

That analyst burden cascades directly to customers. Legitimate transactions get blocked or delayed, cardholders get frozen out of their accounts during travel, and friction compounds. Every false positive erodes trust and adds operational cost without catching a single criminal.

Key consequences of high false positive rates:

- Analysts burn most of their capacity on legitimate transactions, not real threats

- Genuine fraud hides in the noise while queues grow

- Customer trust erodes with each unnecessary block or delay

The Human Capacity Bottleneck

Banks commonly assign 10% to 15% of their total workforce specifically to KYC/AML activities. As digital transaction volumes grow—payments revenue climbed 9% annually since 2019—this staffing model becomes mathematically unsustainable. You cannot hire your way out of exponential transaction growth.

Compliance costs are rising in lockstep. 99% of financial institutions reported compliance cost increases, with 79% seeing technology cost increases specifically for compliance software. Meanwhile, productivity at US banks has fallen by 0.3% per year on average since 2010. More spending, more headcount, and less output — the numbers make the case for a different approach.

What Is Agentic AI — And How Is It Different?

Agentic AI refers to AI systems that act as autonomous or semi-autonomous agents. They set goals, plan tasks, perceive their environment, make decisions, and learn continuously — all with minimal human intervention. Unlike traditional rule-based systems (which follow static logic) or generative AI (which produces content from prompts), agentic AI acts.

The key distinction: agentic AI doesn't just flag suspicious transactions—it investigates them, pulls supporting data from multiple systems, evaluates risk against dynamic behavioral baselines, and can autonomously block transactions, trigger step-up authentication, or draft investigation reports.

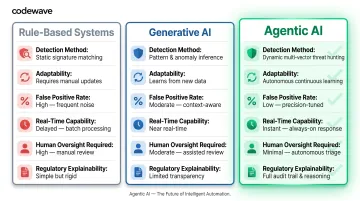

Agentic AI vs. Generative AI vs. Rule-Based Systems

| Dimension | Rule-Based Systems | Generative AI | Agentic AI |

|---|---|---|---|

| Detection Method | Static thresholds and predefined rules | Pattern recognition from training data | Autonomous decision-making with continuous learning |

| Adaptability | Manual reprogramming required | Limited to training data scope | Self-improving from real-world feedback |

| False Positive Rate | 90-95% | Moderate (improves with tuning) | 60% reduction (proven by HSBC) |

| Real-Time Capability | Limited to rule evaluation speed | Inference speed only | Full investigation and action in seconds |

| Human Oversight Required | Alert review for every flag | Copilot assistance, human leads | Human supervises 20+ agents, intervenes only on exceptions |

| Regulatory Explainability | Simple rule trace | Black-box model concerns | Full audit trail per agent action |

Multi-Agent Squad Architecture

Agentic AI systems operate as coordinated squads—multiple specialized agents working together like a digital factory. One agent extracts data from customer documents, another validates information against government registries, a third performs sanctions and PEP screening, a fourth analyzes transaction patterns, and a quality assurance agent reviews the entire workflow before compiling the final report for human sign-off.

This orchestration happens autonomously. Human oversight is reserved for high-complexity exceptions—cases involving unusual jurisdictions, politically exposed persons, or ambiguous ownership structures—typically less than 15-20% of total cases.

The Productivity Leap: 200-2,000%

Generative AI copilots that assist human investigators deliver 15% to 20% productivity gains. Fully autonomous agentic AI agents deliver 200% to 2,000% productivity gains. That's not a marginal gain — it's a different operating model entirely.

In practice, one human practitioner supervises 20 or more AI agents, shifting the role from primary investigator to exception handler and supervisor. Institutions that stop at copilot-level AI capture roughly 10x less productivity than those running full agent squads.

Explainability and Regulatory Compliance

Unlike black-box machine learning models, well-designed agentic AI systems generate complete audit trails for every decision—documenting data sources used, risk reasoning applied, escalation triggers activated, and timestamps for each action. This is critical for regulatory compliance in banking.

The EU AI Act (Regulation 2024/1689) classifies AI systems used for credit scoring and fraud detection as high-risk, requiring transparency, human oversight, and explainability. Similar frameworks are taking shape globally — the OCC, Federal Reserve, and FDIC issued updated guidance in April 2026, while the UK FCA launched its AI Lab and testing sandbox.

Institutions can't bolt compliance on afterward. Audit trails, explainability mechanisms, and human oversight controls need to be built into agentic systems from the start.

How Agentic AI Detects and Prevents Fraud: Core Mechanisms and Use Cases

Real-Time Behavioral Baseline Monitoring

Agentic AI builds individualized behavioral profiles for each customer by analyzing spending habits, device usage, transaction velocity, geographic patterns, login times, and channel preferences. When a deviation occurs—a $15,000 international wire transfer from a new device at 3 AM—the system evaluates risk in context, not against blanket rules.

The agent considers: Is this customer a frequent traveler? Do they regularly make large transfers? Has this device been previously verified? Is the destination country consistent with known business relationships? The evaluation happens in seconds, and the system can autonomously trigger step-up authentication, temporary holds, or fraud alerts based on calculated risk scores—not static thresholds.

AML and KYC Compliance

Agentic AI turns AML and KYC from manual, analyst-heavy processes into automated workflows. One global bank established an AI agent architecture with 10 agent squads, each containing 4-5 specialized agents organized by function: data extraction, government register validation, ownership structure analysis, PEP/sanctions screening, transaction pattern analysis, adverse media screening, and consolidated KYC file compilation.

Real-World Example:

A bank onboards a new corporate client. The agentic KYC factory springs into action:

- Data extraction agents pull information from incorporation documents, shareholder agreements, and beneficial ownership declarations

- Validation agents cross-reference company registration numbers against government databases in multiple jurisdictions

- Ownership analysis agents map complex corporate structures, identifying ultimate beneficial owners across shell companies

- Sanctions/PEP screening agents run all identified individuals and entities against OFAC, UN, EU, and jurisdiction-specific watchlists

- Adverse media agents scan news sources, regulatory filings, and enforcement databases for reputational risks

- QA agents review the compiled dossier for completeness and flag any inconsistencies

- A human compliance officer reviews only the final summary and signs off—or escalates complex cases

The entire process, which previously took 3-4 days of analyst time, now completes in 15 minutes with human intervention only at the final approval stage.

Account Takeover and Identity Fraud Detection

Agentic AI flags account takeover attempts through behavioral anomaly detection. Login from an unrecognized device, sudden credential changes, atypical access times, or rapid-fire transaction attempts all trigger risk evaluation. The system can autonomously prompt step-up authentication (biometric verification, one-time codes) or temporarily lock accounts pending verification—actions that happen in real-time before fraudsters can drain funds.

Synthetic identity detection is another critical capability. Criminals increasingly use AI to fabricate identities that combine real Social Security numbers—often stolen from children or deceased individuals—with fake names, addresses, and employment histories.

Agentic AI cross-references structured data (credit reports, government records) with unstructured data (social media footprints, digital behavior patterns) to spot inconsistencies indicative of fabricated identities. Synthetic identity fraud saw a 60% increase in the UK in 2024, now representing 29% of all identity fraud cases—making automated detection essential.

Transaction Monitoring and Suspicious Activity Reporting

Agentic AI analyzes micro-patterns across multiple channels simultaneously. Monitored channels include:

- Mobile banking, ATM withdrawals, card transactions, wire transfers, and online payments

- Layered transactions — large sums broken into smaller transfers to evade detection thresholds

- Structured transactions — deliberate patterns designed to avoid regulatory reporting requirements

When suspicious activity is detected, agentic AI auto-drafts Suspicious Activity Reports (SARs), compiling transaction histories, flagged patterns, supporting documentation, and regulatory narratives—cutting investigation time from hours to minutes. Human analysts review and approve the reports but no longer build them from scratch.

HSBC's Dynamic Risk Assessment system, co-developed with Google, identifies 2 to 4 times more financial crime than previous methods while achieving a 60% reduction in false positives. The system processes approximately 980 million transactions per month, with analysis time for billions of transactions reduced from several weeks to a few days.

The Dual-Threat Reality

These defensive gains matter because criminals are deploying the same technology offensively. Deepfake incidents in fintech surged 700% in 2023. The FBI warns that criminals exploit generative AI for hyper-personalized spear phishing, fraudulent identification documents, voice cloning for impersonation, and deepfake videos to bypass identity verification. Criminals can buy scamming software and deepfake tools on the dark web for as little as $20.

Banks that delay agentic AI deployment are not simply falling behind on efficiency—they are conceding ground to adversaries who are already running automated, AI-powered fraud operations at scale. The capability gap closes only one way.

Key Benefits of Agentic AI for Fraud Detection in Banking

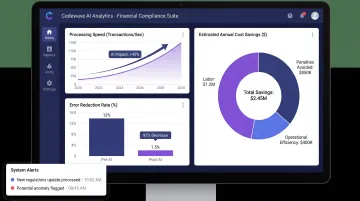

Operational Efficiency and Cost Reduction

Autonomous agent workflows eliminate massive volumes of manual investigation work, freeing compliance teams to focus on high-complexity exceptions. McKinsey's verified research shows agentic AI delivers 200-2,000% productivity gains compared to manual processes—not 20%, but 20x or more in optimized workflows.

One multinational bank achieved an 85% decline in errors and a 97% straight-through processing rate through AI-driven payment process redesign. Another improved productivity by up to 30% and customer experience by 20% using AI-enhanced fraud detection tools.

Codewave has demonstrated similar outcomes for clients, achieving a 60% improvement in data accessibility, 3X faster data processing, approximately 3 weeks saved per month in manual data work, and a 25% reduction in costs through AI-powered data solutions. These outcomes follow from phased deployment and domain-specific model tuning — not out-of-the-box configuration.

Improved Detection Accuracy and Fewer False Positives

Continuous learning from real-world feedback means detection models sharpen over time. HSBC's 60% reduction in false positives represents the strongest verified institutional benchmark—this isn't theoretical improvement, it's proven operational performance.

Reduced false positives mean less customer friction. Legitimate transactions clear faster, cardholders aren't locked out of accounts unnecessarily, and the customer experience improves while genuine threats are caught more reliably. Compliance teams spend less time defending false alarms, and operations teams handle fewer escalations — both direct cost savings.

Scalability and Adaptability to Evolving Threats

Rule-based systems require manual reprogramming for every new fraud typology. Agentic AI learns and adapts to new patterns without human intervention. As attack surfaces expand, the threat landscape keeps shifting. Common fraud typologies now include:

- Card-not-present fraud exploiting e-commerce gaps

- Synthetic identity schemes built from fabricated credentials

- Deepfake-enabled account takeovers bypassing biometric checks

- AI-automated credential stuffing at scale

Agentic systems recognize novel patterns and update detection logic in real time — no manual reprogramming required.

This adaptability matters structurally. With payment volumes growing at 9% annually and criminals deploying their own AI automation, static defenses fall behind fast. Agentic AI compounds its advantage over time, learning from every transaction, every fraud attempt, and every analyst decision.

Implementation Challenges and How to Address Them

Data Quality and Legacy System Integration

Agentic AI requires clean, structured, well-labeled data—but many banks operate fragmented legacy systems with inconsistent data schemas and siloed repositories. Eight in ten companies cite data limitations as the primary barrier to scaling agentic AI.

Start Small, Prove Value First

Leading institutions prove value before scaling. One universal bank ran a four-week pilot with 50+ analysts, codifying data extraction for 50 policy questions and 300 underlying subtasks—validating feasibility and building internal confidence before enterprise-wide rollout.

Start with a defined customer segment (e.g., small business onboarding) or a specific workflow (e.g., sanctions screening) where data is cleanest and impact is measurable.

Data governance investment is a prerequisite, not an afterthought. Organize data sources, create structured metadata, apply strict access rules, and implement validation checkpoints to ensure agents operate only on verified inputs.

Regulatory Explainability and Governance

Regulators increasingly require that automated financial decisions be traceable and defensible. The EU AI Act mandates explainability and human oversight for high-risk AI systems, including fraud detection. The OCC's April 2026 guidance explicitly excluded generative and agentic AI from model risk management frameworks—signaling that specific guidance is forthcoming.

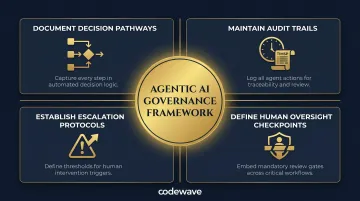

Build Governance In from Day One

Governance built after the fact is expensive to retrofit. Institutions that embed compliance controls from the start should:

- Document decision pathways for every agent interaction

- Maintain audit trails with timestamps and verified data sources

- Establish escalation protocols for edge cases and ambiguous outcomes

- Define human oversight checkpoints — reserved for high-complexity exceptions (under 15-20% of cases), not routine decisions

Institutions that embed explainability now will be positioned when specific agentic AI guidance arrives; those that delay face both competitive disadvantage and regulatory retrofit costs.

Defining Autonomy Boundaries and Ethical Guardrails

Organizations must decide upfront which decisions agents can make autonomously versus which must trigger human review. Examples requiring human oversight: cases involving vulnerable customers (elderly, cognitively impaired), high-stakes outcomes (account closures, large fund freezes), or ambiguous jurisdictional issues.

Set Policy-Aligned Guardrails and Test Against Adversarial Conditions

Establish policy-aligned guardrails that filter unsafe instructions and guide agents toward approved responses. Implement prompt filtering, output validation, and manual override flows for sensitive actions. Conduct adversarial security testing—agentic AI itself can become an attack surface through prompt injection or data poisoning.

Pilot phases should simulate edge cases—not just expected workflows. Testing adversarial inputs, data anomalies, and jurisdictional ambiguities before production deployment reduces both implementation risk and regulatory misalignment. Codewave's QuantumAgile™ methodology structures this process, moving banks from concept to validated deployment with built-in compliance checkpoints at each stage.

Frequently Asked Questions

What is the difference between agentic AI and traditional rule-based fraud detection?

Rule-based systems use static, pre-set thresholds requiring manual updates for every new fraud pattern. Agentic AI learns dynamically, adapts autonomously to emerging threats, and can take preventive actions in real-time—resulting in 60% fewer false positives (proven by HSBC) and broader threat coverage without constant reprogramming.

How does agentic AI reduce false positives in banking fraud detection?

Agentic AI builds individualized behavioral baselines for each customer, evaluating anomalies in context rather than against blanket rules. It refines detection logic continuously from real-world outcomes, learning to distinguish genuine fraud from legitimate unusual behavior—reducing false positive rates from the 90-95% levels typical of traditional systems down to a fraction of that.

Can agentic AI integrate with existing core banking and AML platforms?

Yes. Agentic AI deploys via APIs that connect to existing core banking infrastructure, payment gateways, and compliance platforms (such as NICE Actimize, Oracle FCCM, and SAS AML)—allowing institutions to augment rather than replace current systems and preserve technology investments.

What types of fraud can agentic AI detect in financial services?

Agentic AI detects card fraud, account takeover, synthetic identity fraud, AML/money laundering schemes, insider threats, phishing-linked account compromise, and document forgery—analyzing behavioral patterns across channels that humans and rule-based systems routinely miss.

How does agentic AI maintain regulatory compliance and audit readiness?

Compliant agentic AI systems generate a full decision trail for every agent action—including data sources used, risk reasoning applied, escalation steps triggered, and timestamps for each decision. This makes every action explainable to regulators, auditors, and customers, meeting emerging requirements under the EU AI Act and similar frameworks.

Does agentic AI replace human fraud analysts?

No. Agentic AI augments analysts rather than replacing them—automating high-volume routine monitoring and investigation so human experts can focus on complex exceptions, ethical escalations, vulnerable customer cases, and coaching the AI agent workforce. One human can supervise 20+ agents, increasing organizational capacity without eliminating expertise.