Introduction

US physicians spend a staggering 1.77 hours daily completing documentation outside normal office hours, which adds up to 125 million hours of after-hours charting per year nationwide. This isn't just inconvenient; it's a structural crisis driving clinician burnout. Nearly 75% of physicians with burnout symptoms identify the EHR as a direct source, and for every hour of face-to-face patient time, clinicians spend nearly two additional hours on EHR and desk work.

AI clinical documentation is changing this equation. Ambient AI tools capture patient-clinician conversations and convert them into structured clinical notes in the background, putting attention back on the patient where it belongs.

This piece covers how AI fits into clinical documentation, what the evidence shows, and what responsible deployment actually looks like in practice.

TLDR:

- Ambient AI uses NLP and large language models to generate draft clinical notes from patient conversations

- Evidence shows 20-30% reductions in documentation time and significant drops in clinician burnout

- Clinicians always review and approve AI-generated notes before finalizing

- HIPAA compliance depends on BAAs, encryption, and documented patient consent

- Implementation success depends on EHR integration, clinician training, and change management

The Hidden Cost of Clinical Documentation

Documentation overload is not a minor inconvenience—it's a fundamental structural problem in modern healthcare that directly undermines clinical quality and physician wellbeing.

The burnout connection is undeniable:

- Nearly 75% of physicians with burnout symptoms identify the EHR as a source of burnout

- Physicians with insufficient time for documentation are 2.8 times more likely to report burnout

- Those charting 6+ hours weekly at home are 2.43 times more likely to have higher burnout scores

- 69% of primary care physicians believe most EHR clerical tasks don't require a trained physician

These numbers point to a systemic problem — one that shows up in clinical encounters every day.

How Documentation Burden Plays Out in Practice

When workload outpaces capacity, clinicians lose ground in several predictable ways:

- Split attention during consultations: Divided focus between patient and screen reduces eye contact and limits conversation depth. Patients notice — and it affects their perception of care quality.

- "Pajama time" after hours: Half of physicians spend too much time on EHR use at home. Providers handling over 307 inbox messages per clinical FTE weekly are 6 times more likely to report exhaustion than those in the lowest message quartile.

- Copy-paste proliferation: More than 50% of resident progress notes contain duplicated content from previous notes — creating clutter, obscuring current clinical thinking, and carrying inaccurate information forward.

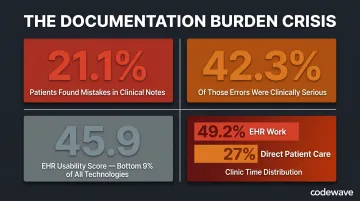

Documentation errors compound all of this. In a survey across 3 US health systems, 21.1% of patients reported finding a perceived mistake in their clinical notes. Of those, 42.3% were considered serious, with diagnosis errors, inaccurate medical history, and medication or allergy mistakes being most common. More than half (58.9%) of the most serious errors were potentially linked to the diagnostic process itself.

EHR adoption improved record-keeping and enabled data exchange — but it also amplified this burden in ways that weren't anticipated. EHR systems average a 45.9 on the System Usability Scale, a score that translates to a grade of F and places them in the bottom 9% of all measured technologies. Google Search, for reference, scores 93.

The time data tells the same story. Clinicians spend 36.2 minutes on the EHR per patient visit. Across the clinic day, EHR work consumes 49.2% of total time — while direct face-to-face patient care accounts for just 27%.

How AI Is Used in Clinical Documentation

AI clinical documentation operates through a multi-layer technology stack that captures, interprets, and structures patient-clinician conversations into usable clinical notes.

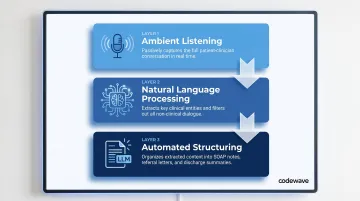

The Three-Layer Technology Stack

Ambient Listening — AI documentation tools use microphones (via smartphone apps, desktop applications, or exam room devices) to passively record the patient-clinician encounter. The system listens throughout the visit without requiring the clinician to type, click, or manually trigger recording for each statement.

Natural Language Processing — Automatic speech recognition (ASR) transcribes the audio, but the critical step is NLP extraction. The AI identifies clinically relevant content (symptoms, dosages, medical history, physical exam findings) and filters out non-clinical small talk, interruptions, and off-topic conversation. This isn't verbatim transcription; it's clinical interpretation.

Automated Structuring — A large language model trained on healthcare data organizes the extracted content into structured note formats: SOAP notes, referral letters, discharge summaries, or billing documentation. The output mirrors the clinician's preferred style and maps directly into EHR fields.
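To make the three layers concrete, here is a minimal Python sketch. It is a toy under stated assumptions, not how any vendor implements this: the transcript is assumed to come from an ASR step already, a keyword list stands in for the NLP extraction model, and a simple routing rule stands in for the LLM. The names `Utterance`, `extract_clinical`, and `to_soap` are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Utterance:
    speaker: str  # "patient" or "clinician"
    text: str

# Hypothetical keyword list standing in for a trained clinical NLP model.
CLINICAL_TERMS = ("pain", "mg", "fever", "cough", "blood pressure", "allergy")

def extract_clinical(transcript):
    """Layer 2 stand-in: keep utterances that mention a clinical term, drop small talk."""
    return [u for u in transcript if any(t in u.text.lower() for t in CLINICAL_TERMS)]

def to_soap(utterances):
    """Layer 3 stand-in: patient statements go to Subjective, clinician statements to Objective."""
    note = {"Subjective": [], "Objective": [], "Assessment": [], "Plan": []}
    for u in utterances:
        section = "Subjective" if u.speaker == "patient" else "Objective"
        note[section].append(u.text)
    return note

transcript = [
    Utterance("clinician", "How was the traffic this morning?"),
    Utterance("patient", "I've had a dry cough and a fever since Monday."),
    Utterance("clinician", "Blood pressure is 128 over 82."),
]
draft = to_soap(extract_clinical(transcript))
```

In this toy run the small-talk line is filtered out and the two clinical statements land in separate sections. A production system would replace both stand-ins with trained models, and Assessment and Plan would be filled from the clinician's stated reasoning.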

How It Works During a Patient Visit

A clinician activates the ambient AI tool at the start of the encounter, often by tapping a button on a smartphone or desktop app. The tool listens passively as the conversation unfolds. It captures verbal exchanges, identifies clinical entities (diagnoses, medications, lab orders), and distinguishes between patient-reported symptoms and clinician observations.

After the visit, the AI generates a draft note within 30-75 seconds. The clinician reviews the draft via a web editor or directly within the EHR, edits as needed, and finalizes the note. Many tools include "instant auditability" features: clicking on AI-generated text plays the corresponding audio segment, so clinicians can verify accuracy without hunting through a full recording.
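One way to picture the auditability feature is as a link between each sentence in the draft and the stretch of audio that supports it. The sketch below assumes such links already exist; `NoteSpan` and `audio_for` are hypothetical names, and real tools may store this mapping quite differently.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class NoteSpan:
    text: str        # sentence as it appears in the draft note
    start_s: float   # start of the supporting audio, in seconds
    end_s: float     # end of the supporting audio, in seconds

def audio_for(spans, clicked_text) -> Optional[tuple]:
    """Return the (start, end) audio window backing the clicked sentence, if any."""
    for span in spans:
        if span.text == clicked_text:
            return (span.start_s, span.end_s)
    return None  # no linked audio: the sentence should be checked manually

spans = [
    NoteSpan("Patient reports dry cough for three days.", 42.0, 47.5),
    NoteSpan("Lisinopril 10 mg daily, no changes.", 130.2, 136.8),
]
window = audio_for(spans, "Lisinopril 10 mg daily, no changes.")
```

A sentence with no linked audio is a useful signal in its own right, since it may be something the model inferred rather than something that was said.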

The Role of Large Language Models

Healthcare-specific LLMs are built to handle specialty terminology, medical abbreviations, ICD-10 codes, and multi-specialty clinical language more reliably than general-purpose AI. These models are trained on de-identified clinical data, structured medical literature, and annotated encounter transcripts.

Important limitation: A study assessing ChatGPT-4's ability to generate SOAP notes from simulated encounters found an average of 23.6 errors per case, with 86.3% omissions and only 52.9% of data elements reported correctly across replicates. At that error rate, an unreviewed AI note could omit critical findings — which is why no AI-generated note should enter an EHR without clinician sign-off.

Clinician Oversight Is Non-Negotiable

AI-generated notes are always drafts. Clinicians review, edit, and approve every note before it's saved to the EHR. The AI handles the scaffolding (organizing dialogue into clinical structure), but the clinician retains full clinical and legal responsibility for the final record.

A professional policy and a federal rule reinforce this directly:

- AMA Policy H-480.940: "AI tools or systems cannot augment, create, or otherwise generate records, communications, or other content on behalf of a physician without that physician's consent and final review."

- ONC HTI-1 Final Rule (compliance beginning 2025): EHR vendors must disclose technical information about AI models used in clinical workflows, including training data and risk management processes.
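In software terms, "always a draft" usually means the save path refuses anything a clinician has not signed. Here is a minimal sketch of that gate, assuming a plain Python list as a stand-in for the EHR; `DraftNote`, `save_to_ehr`, and `UnsignedNoteError` are hypothetical names for illustration only.

```python
from dataclasses import dataclass
from typing import Optional

class UnsignedNoteError(Exception):
    """Raised when a draft is pushed to the EHR without clinician approval."""

@dataclass
class DraftNote:
    body: str
    signed_by: Optional[str] = None  # clinician identifier once approved

    def sign(self, clinician_id: str, edited_body: Optional[str] = None) -> None:
        """Record the clinician's final review, optionally with edits."""
        if edited_body is not None:
            self.body = edited_body
        self.signed_by = clinician_id

def save_to_ehr(note: DraftNote, ehr: list) -> None:
    """Append to the stand-in EHR only if a clinician has signed."""
    if note.signed_by is None:
        raise UnsignedNoteError("AI draft requires clinician review before saving")
    ehr.append(note)

ehr_records = []
note = DraftNote("S: cough x3 days. O: afebrile. A: viral URI. P: supportive care.")
try:
    save_to_ehr(note, ehr_records)   # attempt to save the unsigned draft
    blocked = False
except UnsignedNoteError:
    blocked = True
note.sign("dr-lee")
save_to_ehr(note, ehr_records)       # succeeds after sign-off
```

Putting the check in the save function, rather than relying on the user interface, means no integration path can bypass review.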

Meeting these compliance requirements while actually improving clinician workflows is where implementation design matters. Codewave builds custom AI documentation tools tailored to specific EHR environments, specialty workflows, and regulatory requirements — rather than adapting clinical teams to off-the-shelf products that weren't built for how they work.

What AI Clinical Documentation Actually Delivers

Time Reclaimed Per Clinician

A multi-site study involving UChicago Medicine found an 8.5% reduction in total EHR time and more than a 15% drop in time spent composing clinical notes, translating to 2-3 minutes saved per patient.

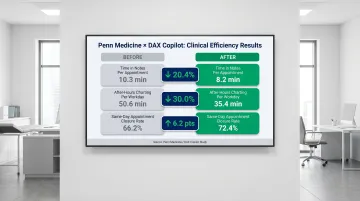

Research at Penn Medicine using DAX Copilot documented even sharper gains:

- Time in notes per appointment decreased from 10.3 to 8.2 minutes (20.4% reduction)

- After-hours "pajama time" dropped from 50.6 to 35.4 minutes per workday (30.0% reduction)

- Same-day appointment closure rate increased from 66.2% to 72.4%
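The percentages above follow from the before-and-after figures. A quick Python check, using only the numbers quoted in this section (`pct_reduction` is just a helper name for this example):

```python
def pct_reduction(before: float, after: float) -> float:
    """Relative reduction from `before` to `after`, as a percentage."""
    return (before - after) / before * 100

notes_time = round(pct_reduction(10.3, 8.2), 1)     # minutes in notes per appointment
pajama_time = round(pct_reduction(50.6, 35.4), 1)   # after-hours minutes per workday
closure_gain = round(72.4 - 66.2, 1)                # percentage points, not percent
```

Note that the closure-rate change is a difference in percentage points (6.2), which is a different measure from the relative reductions in the first two figures.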

What this time translates into:

- More patient appointments scheduled without extending clinic hours

- Less cognitive fatigue at the end of the workday

- Reduced after-hours charting, restoring work-life balance

- Capacity to address urgent add-on appointments when needed

Improved Patient Experience

When clinicians stop typing during consultations, patients report feeling more heard and included in care decisions. UChicago Medicine reported "modest but steady improvements" in patient experience scores, with clinicians using ambient AI most frequently seeing the largest gains. Qualitative findings included longer eye contact, sharper follow-up questions, and more personalized visits.

Clinicians also reported having more energy and compassion to give patients—replacing screen-heavy encounters with genuine therapeutic conversations.

Reduced Physician Burnout

A study at University of Kansas Medical Center using Abridge found:

- 67% agreed the tool reduced their burnout risk due to documentation

- 64% agreed it increased job satisfaction

- 73% agreed it decreased time documenting outside clinical hours

At Penn Medicine, documentation burden scores dropped dramatically:

- Burden preventing work-life balance: from 6.48 to 2.84 (on a 7-point scale)

- Feeling "drained" by documenting: from 6.17 to 3.08

- Feeling mentally overloaded: from 5.72 to 2.32

Self-reported clinician burnout in the UChicago study dropped from approximately 52% to 39%.

Higher Documentation Quality and Fewer Errors

AI-structured notes are more consistent and complete, reducing missed findings, ambiguous entries, and omitted allergy information.

Measured outcomes from two major health systems (KUMC and Penn Medicine) show the effect on note drafting and completion:

| Metric | Baseline | Result |
|---|---|---|
| Note draft generation time (KUMC) | 76 seconds | 38 seconds |
| Odds of completing note before next patient (KUMC) | Baseline | 4.95× higher |
| Clinicians agreeing ≥1 more urgent patient could be added (KUMC) | — | 47% |
| Total note characters per week (Penn Medicine) | Baseline | +20.6% |

Some clinicians at Penn Medicine noted that AI-generated drafts were "wordy" or used "layman's terms" that required editing, pointing to the need for model customization and adequate clinician training before deployment.

Downstream Operational Benefits

More accurate clinical documentation improves coding accuracy, lowers claim denials, and improves audit readiness. These gains come with a caveat worth flagging. A policy brief in npj Digital Medicine raises the issue that ambient AI scribes could facilitate more thorough documentation leading to higher-complexity billing codes, creating potential upcoding risks. Organizations must implement governance to ensure coding reflects true clinical complexity, not just documentation volume.
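One simple governance check is to watch whether the share of higher-level visit codes jumps after rollout. The sketch below is illustrative only: the 10-point threshold is an assumption, the code lists are made up to exercise the function, and a flag here means "send to audit", not "upcoding occurred".

```python
from collections import Counter

HIGH_LEVEL = {"99214", "99215"}  # established-patient office visits, levels 4 and 5

def high_level_share(codes):
    """Fraction of visits billed at the higher E/M levels."""
    counts = Counter(codes)
    total = sum(counts.values())
    return sum(counts[c] for c in HIGH_LEVEL) / total if total else 0.0

def needs_review(before, after, max_shift=0.10):
    """Flag for audit when the high-level share rises by more than max_shift."""
    return high_level_share(after) - high_level_share(before) > max_shift

# Made-up code lists, purely to exercise the functions.
before = ["99213"] * 6 + ["99214"] * 4
after = ["99213"] * 4 + ["99214"] * 5 + ["99215"] * 1
flag = needs_review(before, after)
```

A shift can be entirely legitimate if previous notes under-documented real complexity, which is why the output is a prompt for chart review.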

Compliance, Privacy, and the HIPAA Question

Yes, AI Clinical Documentation Can Be HIPAA Compliant

HIPAA compliance is possible—but it depends entirely on how the tool is configured, deployed, and governed.

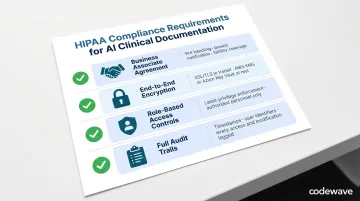

Key requirements include:

- Execute a BAA with every AI vendor that touches PHI — it must cover data handling, breach notification, and liability

- Encrypt data in transit (TLS) and at rest, using key management tools like AWS KMS or Azure Key Vault

- Apply role-based access controls so only authorized personnel can access recordings or AI-generated drafts, with least-privilege enforcement

- Maintain full audit trails — every access event, modification, and transmission logged with timestamps and user identifiers for regulatory readiness
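The access-control and audit-trail requirements can be sketched together, since every access decision should also produce a log entry. This is a minimal illustration in Python; the roles, permission names, and in-memory `audit_log` are assumptions, and a real system would use tamper-resistant log storage.

```python
from datetime import datetime, timezone

# Hypothetical role-to-permission map; real deployments define their own.
PERMISSIONS = {
    "clinician": {"read_draft", "edit_draft", "play_audio"},
    "coder": {"read_draft"},
    "front_desk": set(),
}

audit_log = []

def access(user_id: str, role: str, action: str, note_id: str) -> bool:
    """Allow the action only if the role grants it, and log every attempt."""
    allowed = action in PERMISSIONS.get(role, set())
    audit_log.append({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user_id,
        "action": action,
        "note": note_id,
        "allowed": allowed,
    })
    return allowed

ok = access("u-17", "clinician", "play_audio", "note-001")
denied = access("u-42", "coder", "play_audio", "note-001")
```

Denied attempts are logged as well as granted ones, because failed access is often what an auditor wants to see first.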

How Audio Data Is Handled

Reputable AI documentation tools follow data minimization principles: recordings are used solely to generate the clinical note, then deleted—often within the same day. There is no secondary use, no long-term retention, and no sharing with third parties outside the BAA scope.

For example, Abridge processes Customer Data (including PHI) solely on behalf of customers under BAAs, never sells personal data, and allows users to delete recordings or accounts at any time. Retention timeframes vary by vendor and are defined in individual BAA terms.
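A retention rule like this can be expressed as a small purge job. The following Python sketch assumes a 24-hour maximum age and deletes a recording once its note is finalized; both choices are illustrative assumptions, since actual timeframes come from the BAA, and `Recording` and `purge` are hypothetical names.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

@dataclass
class Recording:
    recording_id: str
    created_at: datetime
    note_finalized: bool

def purge(recordings, now, max_age=timedelta(hours=24)):
    """Split recordings into (kept, deleted_ids).

    A recording is deleted once its note is finalized or it exceeds max_age.
    """
    kept, deleted = [], []
    for r in recordings:
        if r.note_finalized or now - r.created_at > max_age:
            deleted.append(r.recording_id)
        else:
            kept.append(r)
    return kept, deleted

now = datetime(2025, 1, 2, 12, 0, tzinfo=timezone.utc)
recordings = [
    Recording("a", now - timedelta(hours=2), note_finalized=True),
    Recording("b", now - timedelta(hours=30), note_finalized=False),
    Recording("c", now - timedelta(hours=1), note_finalized=False),
]
kept, deleted = purge(recordings, now)
```

Here recording "a" is removed because its note is done, "b" because it has aged out, and "c" is kept while its note is still under review.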

Patient Consent Is Required

Clinicians must inform patients that AI is being used to document the visit and obtain explicit consent before recording. Patients always have the right to decline without any impact on the care they receive.

Research on patient attitudes found that 74.8% of patients were comfortable with ambient AI initially, but comfort dropped to 55.3% when full disclosure about AI features, data storage, and corporate involvement was provided. Patients expressed concerns about self-censoring sensitive information: 35.0% would withhold mental health details, 40.8% sexual health information, and 51.5% details about illicit activity if recorded.

Best practices for consent include:

- Multimodal consent: pre-visit portal notification plus verbal confirmation at the visit

- Separate AI consent from general "consent to treat"

- Involvement of non-clinical staff to reduce power imbalances

- Easy, non-punitive opt-out mechanisms

- Clear disclosure of where audio is sent, who has access, and retention periods
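The multimodal consent and opt-out practices can be enforced in software before the microphone ever turns on. A minimal sketch, assuming a hypothetical `AIConsent` record kept separately from general consent to treat:

```python
from dataclasses import dataclass

@dataclass
class AIConsent:
    portal_notified: bool      # pre-visit notification was sent
    verbal_confirmed: bool     # patient agreed at the visit
    declined: bool = False     # patient opted out at any point

def may_record(consent: AIConsent) -> bool:
    """Recording is allowed only with both consent steps and no opt-out."""
    return consent.portal_notified and consent.verbal_confirmed and not consent.declined

full = may_record(AIConsent(True, True))
partial = may_record(AIConsent(True, False))
opted_out = may_record(AIConsent(True, True, declined=True))
```

The opt-out flag overrides everything else, so a patient who changes their mind mid-visit stops the recording regardless of earlier confirmations.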

Beyond comfort levels, 59.2% of patients did not want their data shared with AI vendors, and 76.7% held vendors directly responsible for data security breaches. These numbers make one thing clear: consent isn't a checkbox; it's a trust-building process that requires transparency at every step.

Best Practices for Rolling Out AI Clinical Documentation

Start with Structured Onboarding and Specialty-Specific Training

AI documentation tools perform best when clinicians learn to speak intentionally—verbalizing findings, guiding the model through the encounter, and understanding how different phrasing affects output.

Effective onboarding includes:

- Live walkthroughs demonstrating tool activation, draft review, and EHR integration

- Peer sharing sessions where early adopters demonstrate workflows

- On-demand video guides and quick-reference cards

- Specialty-specific training modules for oncology, primary care, radiology, and other disciplines

Clinicians should understand what the tool captures (verbal dialogue) and what it misses (non-verbal observations, information not discussed aloud). Training should emphasize the importance of stating critical findings explicitly during the encounter.

Integrate with Your Existing EHR from Day One

AI documentation only delivers full value when it pushes structured content directly into EHR fields via HL7 or FHIR APIs—not as a copy-paste step.

The US Core Implementation Guide v9.0.0 defines requirements for exchanging clinical notes via FHIR, supporting 10 "Common Clinical Notes" types including Consultation Notes, Discharge Summaries, History & Physical Notes, and Progress Notes. Systems must support both read and write operations using the DocumentReference resource.
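For a sense of what that looks like on the wire, here is a minimal Python sketch that builds a DocumentReference for a plain-text progress note. It covers only a subset of fields, so treat it as an outline rather than a profile-conformant resource; the patient ID and note text are placeholders, and `build_document_reference` is a hypothetical helper.

```python
import base64
import json

def build_document_reference(patient_id: str, note_text: str) -> dict:
    """Build a FHIR DocumentReference carrying a plain-text progress note."""
    encoded = base64.b64encode(note_text.encode("utf-8")).decode("ascii")
    return {
        "resourceType": "DocumentReference",
        "status": "current",
        "type": {
            "coding": [{
                "system": "http://loinc.org",
                "code": "11506-3",           # LOINC code for Progress note
                "display": "Progress note",
            }]
        },
        "category": [{
            "coding": [{
                "system": "http://hl7.org/fhir/us/core/CodeSystem/us-core-documentreference-category",
                "code": "clinical-note",
            }]
        }],
        "subject": {"reference": f"Patient/{patient_id}"},
        "content": [{
            # FHIR attachments carry inline data as base64.
            "attachment": {"contentType": "text/plain", "data": encoded}
        }],
    }

resource = build_document_reference("example-123", "S: cough x3 days.")
payload = json.dumps(resource)  # JSON body an integration would send to the EHR
```

Which endpoint receives this payload, and which additional fields (author, date, encounter context) are required, depends on the specific EHR and the US Core version it supports.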

Prioritize tools or custom-built solutions that:

- Support deep EHR integration with your specific platform (Epic, Cerner, Athenahealth, etc.)

- Eliminate manual data transfer steps

- Reduce transcription error risk

- Preserve note formatting and section headers

Getting this right means configuring API connections that reflect how your documentation workflows already operate — not rebuilding workflows around the tool.

Establish a Clear Review-and-Sign Workflow

Define at the organizational level how and when clinicians review AI-generated drafts—before closing the encounter—to maintain accuracy and ensure every note meets documentation standards.

Workflow elements should include:

- Designated review time within the clinical session

- Guidance on what to verify (diagnoses, medication dosages, patient history accuracy)

- Protocols for handling information not verbally stated during the visit

- Escalation paths for recurring AI errors or inaccuracies
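An escalation path needs some definition of "recurring". One simple approach, sketched below in Python, is to count reported error categories and escalate any that cross a threshold. The threshold of 3, the category names, and the `errors_to_escalate` helper are all assumptions for illustration.

```python
from collections import Counter

def errors_to_escalate(reports, threshold=3):
    """Return error categories reported at least `threshold` times, sorted."""
    counts = Counter(category for _, category in reports)
    return sorted(c for c, n in counts.items() if n >= threshold)

# (clinician_id, error_category) pairs; values are illustrative only.
reports = [
    ("dr-lee", "wrong_dosage"),
    ("dr-kim", "wrong_dosage"),
    ("dr-lee", "missed_allergy"),
    ("dr-roy", "wrong_dosage"),
]
escalate = errors_to_escalate(reports)
```

In practice, safety-critical categories such as allergies or dosages may warrant escalation on the first report rather than the third.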

At KUMC, clinicians gave the tool a System Usability Scale score of 76.6 and had 6.91 times higher odds of reporting the workflow was easy to use compared to baseline, which suggests that well-designed review processes support adoption rather than hinder it.

Implementation Succeeds or Fails on Change Management

Clinician adoption requires addressing concerns about accuracy, privacy, workflow disruption, and professional autonomy.

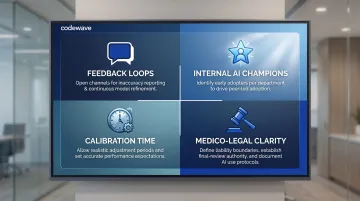

Effective change management strategies include:

- Feedback loops: Open channels for reporting inaccuracies and sharing workflow tips; use that input to refine model outputs and close training gaps

- Internal AI champions: Identify early adopters per department to demonstrate value, troubleshoot issues, and bring skeptical colleagues on board

- Calibration time: AI models improve as they learn individual clinician styles and specialty vocabularies — build in adjustment weeks and set realistic accuracy expectations upfront

- Medico-legal clarity: Define liability for AI-generated content, confirm clinician final-review authority, and document AI use in the medical record

Polarized adoption is common early on. Penn Medicine's Net Promoter Score for their AI documentation tool was 0 — with 35.1% promoters and 35.1% detractors in equal measure. That split typically narrows when time savings are visible and note quality improves through active iteration, not passive deployment.
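A Net Promoter Score is the percentage of promoters minus the percentage of detractors, which is how an evenly split group lands on zero. A short Python check using the Penn Medicine figures quoted above; the passive share is derived here on the assumption that the three categories cover all respondents.

```python
def net_promoter_score(promoters_pct: float, detractors_pct: float) -> float:
    """NPS is the percentage of promoters minus the percentage of detractors."""
    return promoters_pct - detractors_pct

nps = net_promoter_score(35.1, 35.1)
passives_pct = round(100 - 35.1 - 35.1, 1)  # implied share of neutral respondents
```

A score of 0 therefore hides a lot: it can mean universal indifference or, as here, strong opinions on both sides.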

Frequently Asked Questions

How is AI used in clinical documentation?

AI uses ambient listening to capture patient-clinician conversations, applies NLP to extract clinically relevant content, and uses large language models to generate structured clinical notes (SOAP notes, referral letters, discharge summaries). Clinicians always review and approve AI-generated drafts before saving them to the EHR.

Is AI for clinical notes HIPAA compliant?

AI clinical documentation tools can be HIPAA compliant when deployed with proper safeguards—Business Associate Agreements, data encryption, role-based access controls, and minimal audio retention policies. Compliance is determined by how the tool is configured and governed, not by the technology alone.

Can you use AI for clinical notes?

Yes, AI is already widely used for clinical notes through ambient scribe tools. Clinicians review and finalize every draft before it enters the EHR—clinical accuracy and legal accountability remain with the provider throughout.

Do patients need to give consent for AI clinical note-taking?

Yes, patient consent is required before ambient AI recording begins. Best practice combines pre-visit notification with verbal confirmation at the appointment. Patients can always decline without any impact on their care.

How does AI clinical documentation integrate with EHR systems?

Integration typically occurs via HL7 or FHIR APIs, allowing AI-generated structured content to be pushed directly into existing EHR fields. This avoids manual copy-paste steps, reduces transcription errors, and preserves note formatting within the clinician's existing documentation workflow.

What are the current limitations of AI clinical documentation tools?

Tools generate notes based only on what is verbally discussed during the visit—information not mentioned aloud won't appear. Audio quality affects accuracy, multi-speaker sessions can cause attribution errors, and clinician review is always required before notes are finalized. Additionally, models may use layman's terms instead of precise medical language, requiring editing.