Introduction

Bringing a new drug to market costs an average of $879.3 million when accounting for failures and capital costs, with clinical development timelines spanning a median of 8.3 years from first-in-human testing to approval. The approval odds are just as stark: only 8.4% of drugs that enter Phase I trials eventually secure FDA approval, with oncology dropping below 5%. These realities have made clinical research one of the most expensive, slowest, and highest-risk endeavors in modern industry.

AI is already reshaping how trials are designed, how patients are recruited, and how raw data gets turned into decisions. The FDA received over 500 drug application submissions referencing AI components between 2016 and 2023, signaling growing regulatory acceptance. From protocol drafting to real-time safety monitoring, AI is cutting the unproductive delays that have historically added years to development timelines.

TLDR:

- Clinical trials face severe inefficiencies: median 8.3-year timelines, $879.3M average costs, and only 8.4% success rates

- AI accelerates trial design via data simulation, automated protocol drafting, and predictive site selection

- Patient screening improves with NLP-driven EHR review: Human+AI reached 76.1% chart-level accuracy vs. 71.5% for human-alone review

- Real-time data monitoring and automated safety signal detection enable faster query resolution and cleaner regulatory submissions

- Deployment requires phased rollouts, HIPAA/21 CFR Part 11 compliance, and ongoing model validation

Why Clinical Trials Are Overdue for an AI Overhaul

Traditional clinical trials suffer from structural inefficiencies that compound at every stage. Sequential workflows dominate: site activation drags on for months, recruitment crawls through manual chart reviews, and data flows through fragmented systems that create bottlenecks rather than insights. 41% of activated sites fail to meet enrollment targets, and 45% of trials complete late.

Phase I enrollment timelines increased by an average of five months for trials ending in 2023 compared to prior years.

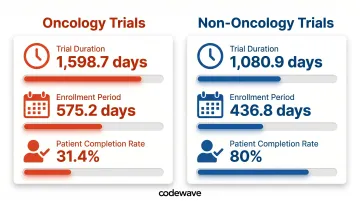

The concept of "white space"—unproductive gaps between trial phases—helps explain why trials take so long. Oncology trials illustrate the problem clearly:

- Mean trial duration: 1,598.7 days for oncology vs. 1,080.9 days for non-oncology (Phase II and III combined)

- Enrollment alone consumes 575.2 days in oncology vs. 436.8 days in non-oncology

- Only 31.4% of oncology patients complete trials vs. 80% in non-oncology

- Randomization rates hover at 67.1%, meaning roughly one-third of screened patients are never randomized

These delays aren't just logistical. Seventy-five percent of protocols require at least one substantial amendment, averaging 3.3 per protocol with 260 days to implement globally. The median direct cost per substantial amendment? $141,000 in Phase II and $535,000 in Phase III.

At that cost and cadence, protocol amendments alone can consume millions per trial before a single efficacy result emerges. AI addresses this directly — compressing white space, flagging protocol risks before amendments become necessary, and improving enrollment precision through predictive patient matching rather than manual chart review.
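
As a rough check on the amendment figures quoted above, the sketch below multiplies the average amendment count by the median direct cost per amendment. Combining a mean count with a median cost is a simplification, so the result is an order-of-magnitude estimate only; the function and constant names are illustrative.

```python
# Figures quoted in the text above.
AVG_AMENDMENTS_PER_PROTOCOL = 3.3
MEDIAN_COST_PER_AMENDMENT = {"Phase II": 141_000, "Phase III": 535_000}


def estimated_amendment_cost(phase: str) -> float:
    """Rough direct amendment cost per protocol (mean count x median cost)."""
    return AVG_AMENDMENTS_PER_PROTOCOL * MEDIAN_COST_PER_AMENDMENT[phase]


for phase in MEDIAN_COST_PER_AMENDMENT:
    print(f"{phase}: ${estimated_amendment_cost(phase):,.0f}")
```

On these inputs the direct cost comes to roughly $465,000 per Phase II protocol and about $1.77 million per Phase III protocol, before counting the indirect cost of the 260-day implementation delay.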

How AI Is Reshaping Clinical Trial Design and Protocol Development

AI transforms trial design by testing assumptions before a single patient enrolls. Using real-world data from electronic health records, patient registries, and insurance claims, machine learning models simulate trial scenarios and predict feasibility. Researchers can adjust inclusion criteria, enrollment targets, and endpoint definitions in silico, identifying design flaws that would otherwise surface mid-trial as costly amendments.
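
To make the idea of adjusting criteria in silico concrete, here is a minimal Monte Carlo sketch of enrollment time under two eligibility scenarios. It is a toy model, not a method described by any source cited here: the site count, daily candidate rate, and the relaxed pass rate of 0.80 are assumptions chosen for illustration, and a production model would be fitted to real-world data.

```python
import random
import statistics


def simulate_enrollment_days(target, sites, rate_per_site_per_day,
                             screen_pass_rate, rng):
    """Simulate one trial: days until at least `target` patients are enrolled."""
    enrolled, day = 0, 0
    while enrolled < target:
        day += 1
        for _ in range(sites):
            # Toy assumption: each site sees at most one candidate per day,
            # and that candidate must also pass screening to be enrolled.
            if rng.random() < rate_per_site_per_day and rng.random() < screen_pass_rate:
                enrolled += 1
    return day


def median_enrollment_days(runs=200, seed=0, **scenario):
    """Median enrollment duration across `runs` simulated trials."""
    rng = random.Random(seed)
    return statistics.median(
        simulate_enrollment_days(rng=rng, **scenario) for _ in range(runs)
    )


# Hypothetical scenario parameters; 0.671 echoes the randomization rate quoted earlier.
base = dict(target=100, sites=10, rate_per_site_per_day=0.05)
strict = median_enrollment_days(screen_pass_rate=0.671, **base)
relaxed = median_enrollment_days(screen_pass_rate=0.80, **base)
print(f"strict criteria: ~{strict:.0f} days, relaxed criteria: ~{relaxed:.0f} days")
```

Running both scenarios before the protocol is finalized shows how much enrollment time a given inclusion criterion costs, which is the kind of trade-off that otherwise surfaces as a mid-trial amendment.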

Key AI applications in trial design include:

- Refined eligibility criteria: Natural language processing scans unstructured EHR data to identify subtle patterns human analysts miss, producing precise patient population definitions and reducing mid-trial protocol changes

- Automated protocol drafting: Large language models synthesize regulatory requirements, therapeutic area precedent, and sponsor objectives to generate draft protocols faster than traditional manual processes

- Strategic site selection: AI analyzes historical site performance, patient demographics, geographic reach, and recruitment track records to identify sites most likely to meet enrollment targets—achieving 58% higher match similarity vs. leading baselines and improving race/ethnicity fairness
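
The site-selection idea can be illustrated with a simple weighted ranking. This is a deliberately simplified stand-in for the machine learning models referenced above: the `Site` fields, the 0.6/0.4 weights, and the function names are all assumptions made for the sketch.

```python
from dataclasses import dataclass


@dataclass
class Site:
    name: str
    past_enrolled: int      # patients enrolled across prior trials
    past_target: int        # combined enrollment targets for those trials
    eligible_patients: int  # estimated local patients matching the criteria


def score(site: Site, needed_per_site: int) -> float:
    """Blend historical target attainment with local patient supply (0 to 1)."""
    attainment = min(site.past_enrolled / site.past_target, 1.0) if site.past_target else 0.0
    supply = min(site.eligible_patients / needed_per_site, 1.0)
    # Arbitrary illustrative weights; a real model would learn these from data.
    return 0.6 * attainment + 0.4 * supply


def rank_sites(sites, needed_per_site):
    """Return sites ordered from most to least likely to meet enrollment."""
    return sorted(sites, key=lambda s: score(s, needed_per_site), reverse=True)
```

A site with a strong track record but a small eligible population can rank below a less experienced site with ample patient supply, which is the trade-off a feasibility team would want surfaced explicitly.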

These design improvements extend into dosing, where predictive modeling reduces guesswork before Phase I begins. Physiologically-based pharmacokinetic (PBPK) models, which are computational representations of drug absorption, distribution, metabolism, and excretion, can support waivers of certain in vivo studies and inform dose selection. Between 2020 and 2024, 26.5% of NDAs and BLAs included PBPK models as pivotal evidence, supporting labeling decisions for drugs like seladelpar, deuruxolitinib, and vorasidenib.

The compounding effect is measurable: fewer protocol amendments, faster site activation, and enrollment forecasts that hold up through the full trial lifecycle. Design questions that once took teams months of deliberation can be resolved in days, with documented evidence backing each decision.

AI-Powered Patient Recruitment: Faster, More Diverse Trials

Patient recruitment is the single biggest bottleneck in clinical trials. AI addresses this by automating the most time-intensive step: patient screening. NLP applied to unstructured EHR data (physician notes, lab results, patient histories) flags potentially eligible candidates for reviewers to confirm. A randomized noninferiority study found that Human+AI chart-level accuracy reached 76.1% vs. 71.5% for human-alone review, while AI alone achieved 59.9%. Review time remained stable at approximately 37-38 minutes per chart, meaning AI assistance improved accuracy without slowing the process.
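
The pre-screening step can be sketched as a pattern match over note text that sorts charts into buckets for human review. This is a keyword stand-in for a trained NLP model, and the inclusion and exclusion criteria are hypothetical examples rather than criteria from any real protocol.

```python
import re

# Hypothetical criteria for illustration only.
INCLUSION = [r"\btype 2 diabetes\b", r"\bmetformin\b"]
EXCLUSION = [r"\bpregnan(t|cy)\b", r"\bdialysis\b"]


def prescreen(note: str) -> dict:
    """Classify a free-text note as likely eligible, likely ineligible, or needs review."""
    text = note.lower()
    met = [p for p in INCLUSION if re.search(p, text)]
    excluded = [p for p in EXCLUSION if re.search(p, text)]
    if excluded:
        status = "likely ineligible"
    elif len(met) == len(INCLUSION):
        status = "likely eligible"
    else:
        status = "needs review"
    return {"status": status, "inclusion_hits": met, "exclusion_hits": excluded}
```

Keyword matching ignores negation, so a note reading "no history of dialysis" is still marked likely ineligible. Errors of this kind are one reason the combined Human+AI workflow outperformed AI alone in the study above, and why the output here is a triage label rather than a final decision.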

Addressing the diversity gap

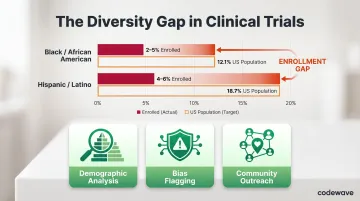

Clinical trials have historically excluded women, people of color, and lower-income populations. The data is stark:

- Women represented 40-72% of participants in trials for new molecular entities and biologics (2015-2020), with severe intersectional gaps—only 3.2% of women enrolled in global cardiovascular trials were Black or African American

- In oncology trials (2017-2020), Black/African American participants made up just 2-5% and Hispanic/Latino participants 4-6%, compared to US population shares of 12.1% and 18.7% respectively

AI can identify and engage underrepresented groups by analyzing demographic and disease prevalence data. Algorithms can detect and flag bias in recruitment strategies, ensuring outreach extends beyond traditional academic centers into community health systems and underserved geographies.
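
A basic version of the bias check described above compares each group's share of enrolled participants with its share of the population. The population shares below are the US figures quoted earlier; the 0.5 tolerance and the function name are assumptions for the sketch, and any real enrollment counts would come from the trial's own data.

```python
# US population shares quoted in the text above.
POPULATION_SHARE = {"Black/African American": 0.121, "Hispanic/Latino": 0.187}


def representation_gaps(enrolled_counts, total_enrolled,
                        population_share=POPULATION_SHARE, tolerance=0.5):
    """Return groups whose enrolled share is below `tolerance` x population share.

    The value for each flagged group is the ratio of enrolled share to
    population share (1.0 would mean proportional representation).
    """
    flagged = {}
    for group, pop_share in population_share.items():
        share = enrolled_counts.get(group, 0) / total_enrolled
        ratio = share / pop_share
        if ratio < tolerance:
            flagged[group] = round(ratio, 2)
    return flagged
```

Run periodically during enrollment, a check like this tells coordinators which outreach channels to expand while there is still time to change the recruitment mix.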

Improving retention and adherence

Recruiting diverse participants is only half the challenge — keeping them enrolled is the other. AI-driven engagement tools—personalized content, chatbots, voice assistants, and smart reminders—help predict and prevent patient dropout. Digital biomarkers and wearable data flag non-adherence in real time, allowing coordinators to intervene before participants disengage.

Industry reports estimate average dropout rates around 30%, with average recruitment costs per participant at $6,500. That makes retention both a financial and scientific priority.
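
The real-time adherence flagging described above can be reduced to a rolling-average rule over wearable data. This sketch assumes daily wear time in hours is the adherence signal; the 7-day window and 8-hour threshold are illustrative values, not clinical recommendations.

```python
def at_risk(wear_hours_by_participant, window=7, min_avg_hours=8.0):
    """Return IDs whose average daily wear time over the last `window` days is low."""
    flagged = []
    for pid, hours in wear_hours_by_participant.items():
        recent = hours[-window:]
        # Participants with no data yet are skipped rather than flagged.
        if recent and sum(recent) / len(recent) < min_avg_hours:
            flagged.append(pid)
    return flagged
```

A predictive model would replace the fixed threshold with a learned dropout probability, but the workflow is the same: produce a short list of participants for a coordinator to contact.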

Decentralized clinical trials (DCTs) expand reach to rural and underserved communities. The FDA's 2024 Final Guidance on Conducting Clinical Trials With Decentralized Elements provides recommendations for telehealth, in-home visits, and local healthcare provider visits. Evidence from remote COVID-19 trials shows that decentralized designs included more racial and ethnic minorities and geographically diverse participants, though concerns about the digital divide remain.

AI ties these distributed systems together—handling remote data collection, real-time participant monitoring, and real-world evidence integration across sites without requiring centralized infrastructure.

From Raw Data to Actionable Insights: AI in Data Analysis and Safety Monitoring

Data management is where AI delivers some of its clearest operational wins. AI automates the most time-consuming tasks: data entry, cleaning, deduplication, and imputation of missing values — directly improving data quality and compressing timelines.
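
Two of the tasks named above, deduplication and imputation, can be sketched in a few lines. The field names (`patient_id`, `hba1c`) are hypothetical, and median imputation is used only because it is simple to show; in a real trial the imputation method would be prespecified in the statistical analysis plan.

```python
import statistics


def clean_records(records, key="patient_id", numeric_field="hba1c"):
    """Drop duplicate rows by key, then median-impute a missing numeric field."""
    seen, unique = set(), []
    for row in records:
        if row[key] not in seen:      # keep the first occurrence of each key
            seen.add(row[key])
            unique.append(dict(row))  # copy so the input is not mutated
    observed = [r[numeric_field] for r in unique if r[numeric_field] is not None]
    fill = statistics.median(observed) if observed else None
    for r in unique:
        if r[numeric_field] is None and fill is not None:
            r[numeric_field] = fill
            r["imputed"] = True       # flag every filled value for auditability
    return unique
```

Marking each imputed value keeps the cleaning step traceable, so reviewers can separate observed data from filled data later.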

Purpose-built AI data pipelines in healthcare contexts can deliver 3x faster data processing and up to 90% fewer data errors, turning what was once a months-long manual effort into a continuous, automated workflow.

Real-time, continuous monitoring replaces periodic human review. AI ingests data from multiple sources — EHRs, wearables, patient-reported outcomes — enabling earlier anomaly detection and faster query resolution. For safety monitoring, catching a signal one week earlier can directly change a patient outcome.

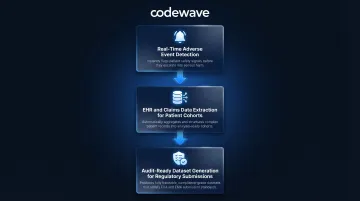

AI-enabled monitoring supports three functions:

- Safety signal detection: Detects clusters of adverse events in real time, predicts escalations before they become serious, and automates pharmacovigilance reporting (ADR documentation)

- Real-world data structuring: Extracts and structures data from EHRs, medical claims, and disease registries to build patient cohorts and EHR phenotypes that support enrichment strategies

- Submission-ready datasets: Produces datasets that are complete, consistent, and audit-ready, accelerating regulatory submissions and reducing back-and-forth with agencies
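
Adverse-event signal detection of the kind described above is often introduced through disproportionality statistics. The sketch below computes the proportional reporting ratio (PRR), a standard pharmacovigilance measure, as a simple stand-in for the AI methods this section discusses. The thresholds of at least 3 cases and a PRR of at least 2 are a simplified version of a commonly cited screening heuristic, and the function names are illustrative.

```python
def prr(a: int, b: int, c: int, d: int) -> float:
    """Proportional reporting ratio from a 2x2 table of report counts.

    a: drug of interest, event of interest   b: drug of interest, other events
    c: other drugs, event of interest        d: other drugs, other events
    """
    if c == 0:
        # No comparator reports of the event: ratio is unbounded if a > 0.
        return float("inf") if a > 0 else 0.0
    return (a / (a + b)) / (c / (c + d))


def is_signal(a, b, c, d, min_cases=3, min_prr=2.0):
    """Flag a drug-event pair for review; a screening aid, not proof of causation."""
    return a >= min_cases and prr(a, b, c, d) >= min_prr
```

For example, 20 reports of an event among 200 reports for one drug, against 50 among 5,000 reports for all other drugs, gives a PRR of 10 and would be flagged for human evaluation.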

The FDA views real-time AI safety monitoring as one of the most promising clinical applications. The agency's discussion paper catalogs AI uses in postmarket safety surveillance, including ICSR processing, evaluation, and automated case submission—all areas where speed and accuracy directly impact patient safety.

The Real Challenges: Where AI Falls Short in Clinical Research

AI's promise comes with significant limitations. The most fundamental: AI models are only as good as their training data. Medical datasets are often incomplete, unrepresentative, or skewed toward certain demographics. The FDA emphasizes that data must be "fit for purpose"—relevant and appropriate to the intended use and population—but this standard is difficult to consistently meet. Demographic bias undermines generalizability: an algorithm trained predominantly on data from white male patients may perform poorly when applied to women or minority populations.

The explainability challenge remains a regulatory and trust barrier. Complex AI models—especially deep learning systems—can arrive at recommendations without transparent reasoning. This "black box" problem raises concerns around regulatory acceptance, liability, and clinician trust.

Regulators handle it differently. The EMA prefers interpretable models, permitting black box approaches only when justified by superior performance and supported by explainability metrics. The FDA focuses on model credibility for the intended use rather than prohibiting black boxes outright—but the tension between performance and transparency is real.

Organizational and human challenges are equally critical:

- Change management: Workflows must be restructured, staff retrained, and institutional resistance addressed before AI can deliver value

- Workforce upskilling: Teams need enough AI literacy to validate model outputs, catch errors, and translate findings into decisions that hold up under regulatory scrutiny

- Irreplaceable human roles: Formulating novel hypotheses, interpreting ambiguous clinical findings, and sustaining participant trust over a multi-year trial all require capabilities no current model replicates

The practical implication: organizations that treat AI as a drop-in replacement for researcher expertise tend to encounter these limitations the hard way. Those that integrate AI as a decision-support layer—with human oversight built in from the start—are better positioned to navigate both the regulatory requirements and the operational realities of modern trial design.

Building an AI-Ready Clinical Research Pipeline

Building an AI-ready pipeline isn't a single deployment — it's a staged process that matches tool complexity to organizational readiness. Start with a phased implementation approach: deploy AI first in low-risk, high-value use cases (automated data cleaning, patient screening, site selection) before expanding to higher-stakes applications like protocol design or real-time safety monitoring. Match each rollout phase to the relevant regulatory requirements:

- FDA's risk-based approach: The January 2025 draft guidance, Considerations for the Use of Artificial Intelligence To Support Regulatory Decision-Making for Drug and Biological Products, sets out a risk-based framework for assessing model credibility

- 21 CFR Part 11: Applies to electronic records and signatures in FDA-regulated contexts; required controls include access restrictions, system validation, documentation, and training

- HIPAA: Governs patient data privacy and security; any AI pipeline handling protected health information must meet encryption, access control, audit log, and third-party integration requirements
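
The audit log requirement mentioned above can be illustrated with a hash-chained log, where each entry's hash covers the previous entry so that later edits are detectable. This is a sketch of one tamper-evidence technique under assumed field names, not a compliance implementation; meeting HIPAA or 21 CFR Part 11 also involves access control, validation, and procedural safeguards that code alone does not provide.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder hash for the first entry in the chain


def _digest(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_entry(log: list, user: str, action: str) -> dict:
    """Append a time-stamped entry whose hash covers the previous entry's hash."""
    body = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "action": action,
        "prev_hash": log[-1]["hash"] if log else GENESIS,
    }
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry


def verify(log: list) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True
```

Logging every model run and data access this way gives auditors a verifiable record of who did what and when, which supports the documentation expectations listed above.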

Choose AI partners with deep clinical domain expertise rather than generic horizontal platforms. Clinical trials carry unique compliance, data sensitivity, and validation requirements that general-purpose AI tools aren't built to handle. Partners who understand GCP guidelines, regulatory submission standards, and trial data workflows will compress your implementation timeline — and reduce the risk of costly mid-trial course corrections.