Introduction

Organizations now run an average of 200 AI tools across their operations, yet only 28% of employees know how to use them effectively. The tools are there: LLMs, automation bots, analytics engines, recommendation systems. What's missing is the connective layer that makes them work together.

The scaling problem is acute. Nearly two-thirds of organizations haven't begun scaling AI enterprise-wide, and 95% of AI pilots fail to deliver measurable business impact. The core challenge isn't AI capability; it's AI coordination. Without orchestration infrastructure, enterprises face fragmented deployments, manual handoffs between systems, and no path from pilot to production.

This guide gives decision-makers a practical path: from understanding what AI orchestration actually is, to implementing it in a way that turns siloed AI spend into measurable business results.

TLDR:

- AI orchestration coordinates multiple models, agents, and data pipelines into end-to-end workflows without constant human intervention

- It operates through three pillars: integration, automation, and management (which covers governance)

- Gartner projects 40% of enterprise apps will embed AI agents by end of 2026, up from less than 5% in 2025

- Proven outcomes include 70% faster processing, 40% cost reductions, and measurable compliance improvements

- Start narrow with one workflow, instrument everything, and establish governance before scaling

What Is AI Orchestration?

AI orchestration is the coordination layer that manages how multiple AI models, agents, data pipelines, and tools interact. It determines what runs, in what sequence, with what data, and how failures are handled — without constant human intervention.

Think of it like a conductor: they don't play any instrument, but they govern when each musician enters and how the whole performance holds together. An AI orchestration system works the same way — it doesn't process data itself. It governs sequencing, shared context, state management, and exception handling across the entire system.

What AI Orchestration Is NOT

Several adjacent concepts get conflated with orchestration — here's where the lines fall:

- Not a single AI model — orchestration coordinates models; it doesn't replace them

- Not a basic automation rule engine — traditional workflow tools follow rigid if-then logic; orchestration adds context-aware decision-making that adapts to variability

- Not MLOps — MLOps manages individual model lifecycles (training, deployment, monitoring); orchestration operates above that layer, coordinating models, agents, APIs, and human checkpoints across complete business workflows

The 2026 Context: From Niche to Foundational

Those distinctions matter more now than they did two years ago. Orchestration has shifted from a DevOps concern to a foundational architecture decision — driven by the rise of agentic AI, multi-agent frameworks, and interoperability standards like Anthropic's Model Context Protocol (MCP).

Gartner predicts that 40% of enterprise apps will feature AI agents by end of 2026, up from less than 5% in 2025. Without orchestration infrastructure, this surge will amplify fragmentation rather than deliver value.

Forrester formalized this shift in September 2025 by defining Adaptive Process Orchestration (APO) as the convergence of AI agents, nondeterministic control flows, and traditional automation—explicitly stating that "deterministic workflow engines, RPA bots, and DPA tools are not powerful enough to implement the complexities required for autonomous operations."

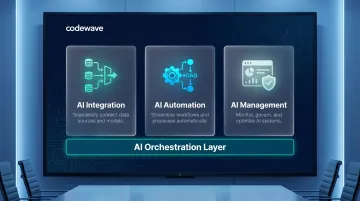

How AI Orchestration Works: The Three Core Pillars

Every orchestration system operates through three pillars: Integration, Automation, and Management. Each one addresses a distinct challenge — how data moves, how tasks execute, and how the whole system stays under control.

AI Integration

AI integration connects diverse AI models, data sources, APIs, and tools so information flows without manual handoffs.

Key mechanisms:

- Data pipelines move and transform data between systems, ensuring each component receives inputs in the correct format

- API and function calling links models to external tools, databases, and business logic

- RAG (Retrieval-Augmented Generation) connects LLMs to proprietary knowledge bases, so models reference authoritative internal data without retraining

Integration in action: A sentiment analysis model processes customer feedback and passes negative results to a classification model. The classifier routes categorized issues to a summarization model, which generates executive alerts. All of this triggers automatically from a single inbound message—no human relay required.
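The chain described above can be sketched in a few lines. This is a minimal illustration only: the three "models" are hypothetical keyword-based stand-ins (`sentiment`, `classify`, `summarize`), and `handle_inbound` is an assumed name for the entry point, not part of any real product. The point is the handoff: each stage's output becomes the next stage's input, with no human relay.

```python
from typing import Optional

# Hypothetical stand-ins for real models; each is a plain function here.
NEGATIVE_WORDS = {"broken", "late", "refund", "terrible"}

def sentiment(message: str) -> str:
    """Toy sentiment model: 'negative' if any flagged word appears."""
    words = set(message.lower().split())
    return "negative" if words & NEGATIVE_WORDS else "positive"

def classify(message: str) -> str:
    """Toy classifier: pick a category by keyword."""
    text = message.lower()
    if "refund" in text:
        return "billing"
    if "late" in text:
        return "shipping"
    return "general"

def summarize(message: str, category: str) -> str:
    """Toy summarizer: produce a one-line executive alert."""
    return f"[{category.upper()}] {message[:60]}"

def handle_inbound(message: str) -> Optional[str]:
    """Run the chain; only negative feedback reaches the later stages."""
    if sentiment(message) != "negative":
        return None
    category = classify(message)
    return summarize(message, category)

alert = handle_inbound("My order arrived late and broken")
```

In a real deployment each function would wrap a model or API call, but the shape of the handoff would likely stay the same.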

Once data moves reliably between systems, the next challenge is execution — running the right tasks in the right order without human intervention.

AI Automation

AI automation handles the execution of AI tasks and decisions without constant human intervention, using intelligent dependency management and quality gates.

Core components:

- DAG-based (Directed Acyclic Graph) dependency management ensures tasks execute in the correct sequence, with downstream processes waiting for upstream results

- Event-driven triggers launch workflows automatically when conditions are met (e.g., new document uploaded, threshold breached, time-based schedule)

- Data quality gates halt workflows when anomalies or schema drift are detected, preventing bad data from propagating
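To make the first and last of these concrete, here is a small sketch of dependency-ordered execution with a quality gate. It uses `graphlib.TopologicalSorter` from the Python standard library (3.9+). The task names, the `run_dag` helper, and the gate check are illustrative assumptions, not a reference to any particular orchestration product.

```python
from graphlib import TopologicalSorter

class QualityGateError(Exception):
    """Raised when a task's output fails its data quality check."""

def run_dag(tasks, dependencies, gates):
    """Run tasks in dependency order, halting at the first failed gate.

    tasks:        name -> function(results_so_far) -> output
    dependencies: name -> set of upstream task names
    gates:        name -> function(output) -> bool (optional per task)
    """
    results = {}
    # static_order() yields each task only after all of its upstream tasks.
    for name in TopologicalSorter(dependencies).static_order():
        output = tasks[name](results)
        gate = gates.get(name)
        if gate is not None and not gate(output):
            raise QualityGateError(f"gate failed after task '{name}'")
        results[name] = output
    return results

# Hypothetical three-step workflow: extract -> validate -> score.
tasks = {
    "extract": lambda r: [{"id": 1, "amount": 40.0}, {"id": 2, "amount": 60.0}],
    "validate": lambda r: [row for row in r["extract"] if row["amount"] >= 0],
    "score": lambda r: sum(row["amount"] for row in r["validate"]),
}
dependencies = {"extract": set(), "validate": {"extract"}, "score": {"validate"}}

# Schema check: every extracted row must carry both expected fields.
gates = {"extract": lambda rows: all(set(row) >= {"id", "amount"} for row in rows)}

results = run_dag(tasks, dependencies, gates)
```

If the `extract` gate fails, `run_dag` raises before `validate` or `score` ever run, which is the "halt before bad data propagates" behavior described above.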

AI automation vs. rule-based automation: Traditional automation follows fixed scripts. AI automation adapts to context, learns from outcomes, and handles dynamic, variable real-world workflows—making it suitable for environments where inputs and conditions change unpredictably.

Reliable execution, though, only holds value if the system remains observable, correctable, and compliant over time. That's where management comes in.

AI Management

AI management covers monitoring, governance, and lifecycle control—ensuring AI systems remain reliable, compliant, and auditable at scale.

Critical capabilities:

- Real-time performance tracking (latency, accuracy, throughput)

- Version control for models and agents

- Audit logging of every decision and action

- Role-based access control limiting who can deploy or modify workflows

- Human-in-the-loop checkpoints triggered by confidence thresholds

- Retry and error-handling patterns that prevent transient failures from cascading
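Three of these capabilities (audit logging, confidence-based human checkpoints, and retries) fit in a short sketch. Everything named here is an assumption for illustration: the in-memory `audit_log`, the 0.8 threshold, and the `flaky_model` stub that simulates a transient outage. A production system would use durable storage and real model calls.

```python
import time

audit_log = []  # in a real system this would be durable, append-only storage

def call_with_retry(fn, attempts=3, base_delay=0.01):
    """Retry a flaky call with exponential backoff; re-raise after the last attempt."""
    for attempt in range(1, attempts + 1):
        try:
            return fn()
        except ConnectionError as exc:
            audit_log.append({"event": "retry", "attempt": attempt, "error": str(exc)})
            if attempt == attempts:
                raise
            time.sleep(base_delay * 2 ** (attempt - 1))

def decide(prediction, confidence, threshold=0.8):
    """Auto-approve confident predictions; route the rest to a human reviewer."""
    route = "auto" if confidence >= threshold else "human_review"
    audit_log.append({"event": "decision", "prediction": prediction,
                      "confidence": confidence, "route": route})
    return route

# Hypothetical model call that fails twice before succeeding.
calls = {"count": 0}

def flaky_model():
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionError("model endpoint timed out")
    return ("approve_claim", 0.65)

prediction, confidence = call_with_retry(flaky_model)
route = decide(prediction, confidence)
```

Here the call succeeds on the third attempt, the 0.65 confidence falls below the threshold, and the decision is routed to `human_review`. Every retry and the final decision are recorded in `audit_log`.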

Why this matters for regulated industries: In healthcare, finance, and insurance, centralized governance ensures all AI actions are traceable and auditable. For regulated sectors, this isn't a nice-to-have. The NIST AI Risk Management Framework, although voluntary, explicitly calls for mechanisms to "supersede, disengage, or deactivate AI systems" showing inconsistent outcomes. And with 49% of AI outputs still requiring manual expert review before production use, governance infrastructure is what separates a demo from a deployable system.

AI Orchestration vs. Related Concepts

These terms overlap, and mixing them up leads to buying the wrong tools or underinvesting in the layer that actually matters.

AI Orchestration vs. AI Agents

AI agents are autonomous units that reason and act on specific tasks—like a customer support chatbot, fraud detection model, or document classifier.

AI orchestration is the infrastructure layer that coordinates multiple agents, determining their sequence and dependencies, sharing context between them, and defining what happens when one fails.

Put simply: agents do the work; orchestration decides which agent does what, in what order, and what to do when something breaks.
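A rough sketch of that division of labor follows. The `Orchestrator` and `Step` classes and the three agent functions are hypothetical, written only to show the split: agents are ordinary callables that read the shared context and return updates, while the orchestrator owns the sequence, the context, and the failure policy.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Optional

Agent = Callable[[Dict[str, Any]], Dict[str, Any]]

@dataclass
class Step:
    name: str
    agent: Agent
    fallback: Optional[Agent] = None  # used only if the primary agent raises

@dataclass
class Orchestrator:
    steps: List[Step]
    context: Dict[str, Any] = field(default_factory=dict)

    def run(self, initial: Dict[str, Any]) -> Dict[str, Any]:
        """Run steps in order, merging each agent's output into shared context."""
        self.context = dict(initial)
        for step in self.steps:
            try:
                update = step.agent(self.context)
            except Exception:
                if step.fallback is None:
                    raise
                update = step.fallback(self.context)
                self.context.setdefault("fallbacks_used", []).append(step.name)
            self.context.update(update)
        return self.context

# Hypothetical agents: each does one job and knows nothing about the others.
def classify_ticket(ctx):
    return {"category": "billing" if "invoice" in ctx["text"].lower() else "general"}

def fraud_check(ctx):
    raise TimeoutError("fraud service unavailable")  # simulated outage

def fraud_check_fallback(ctx):
    return {"fraud_risk": "unknown", "needs_review": True}

def draft_reply(ctx):
    suffix = " to a specialist." if ctx.get("needs_review") else "."
    return {"reply": f"Routing your {ctx['category']} request{suffix}"}

orchestrator = Orchestrator([
    Step("classify", classify_ticket),
    Step("fraud_check", fraud_check, fallback=fraud_check_fallback),
    Step("reply", draft_reply),
])
result = orchestrator.run({"text": "Question about my invoice"})
```

Note that `draft_reply` never learns that the fraud service failed. It only sees `needs_review` in the shared context, which is the kind of decoupling an orchestration layer is meant to provide.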

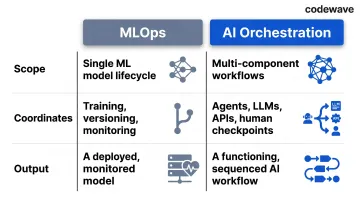

AI Orchestration vs. ML Orchestration (MLOps)

MLOps manages the lifecycle of individual machine learning models: training, validation, deployment, monitoring, and versioning.

AI orchestration operates at a higher level, coordinating not just ML models but also LLMs, rule-based systems, RPA tools, APIs, and human-in-the-loop checkpoints across entire workflows.

MLOps is scoped to a single model's health. AI orchestration is scoped to the end-to-end workflow those models participate in — including everything that isn't a model at all.

At a glance:

| | MLOps | AI Orchestration |
|---|---|---|
| Scope | Single ML model lifecycle | Multi-component workflows |
| Coordinates | Training, versioning, monitoring | Agents, LLMs, APIs, human checkpoints |
| Output | A deployed, monitored model | A functioning, sequenced AI workflow |